#8 Call Site Design

People, what’s good?

After 2 weeks of chasing business value we’ll shift gears this week and think about software design. I’ll describe the idea of designing for the call site, which applies to all levels of software development. From low-level functions to distributed systems - you always want to design for the call site.

This idea has been floating around in my mind for some time. The best name I can come up with is “designing for the call site”. It’s all about naming, designing and testing software. It boils down to defining appropriate surface APIs. If you do it well, your software falls into place. If you do it poorly, you end up with rigid, hard-to-understand, costly-to-change spaghetti code.

Ok, first of all, what is the call site? Wikipedia says it’s the location where a function is called. I think of it in more general terms as the location where a program is called (for example, a function is a small program). So if we design for the call site, we design the surface area or surface API of a program. That is to say, we focus on surface area more than on internal implementation detail.

What constitutes surface area? Well, it depends. It’s the surface area a program exposes to another program. For example: An object exposes members - that’s the object’s surface area. A module exposes objects and functions - that’s the module’s surface area. A web service exposes resources or remote procedure calls - that’s the web service’s surface area. A process exposes inter-process communication - that’s the process’s surface area. We could say that it’s any potential integration point of software. Yet I’m not sure that is a useful definition.
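To make the object case concrete, here is a minimal Python sketch. The `ShoppingCart` class and the `surface_area` helper are made up for illustration. Python does not enforce privacy, so the sketch assumes the common convention that names starting with an underscore are internal.

```python
class ShoppingCart:
    """Hypothetical example object, not taken from any real library."""

    def __init__(self):
        # Internal detail: the leading underscore marks it as non-public by convention.
        self._items = []

    def add(self, name, price):
        self._items.append((name, price))

    def total(self):
        return self._sum_prices()

    def _sum_prices(self):
        # Internal helper: callers are not supposed to depend on it.
        return sum(price for _, price in self._items)


def surface_area(obj):
    """List the members an object exposes, going by the underscore convention."""
    return sorted(name for name in dir(obj) if not name.startswith("_"))


cart = ShoppingCart()
cart.add("book", 12.5)   # <- this line is a call site
cart.add("pen", 2.5)     # <- and so is this one

print(surface_area(cart))  # ['add', 'total']
print(cart.total())        # 15.0
```

Two members make up the surface area. Everything else can change without any call site noticing.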

So let’s approximate it from different angles.

How Test Driven Design shapes Surface Area

On Twitter Dave Farley argued that driving development with tests surfaces design problems. I argue that writing tests after the fact still surfaces the same design problems. But Dave makes a good point: writing the test first forces you to design from call site. You have to imagine the ideal shape, surface area, surface API of the program under test before it exists.
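A small sketch of what that looks like in practice, assuming a hypothetical `slugify` function (the name and behavior are invented for this example). The test comes first in the file and acts as the first call site. It fixes the name, the argument and the return value before any implementation exists.

```python
import re


# Step 1: the test is written first. It is the first call site, so it decides
# what the function is called, what it takes and what it returns.
def test_slugify_makes_url_friendly_titles():
    assert slugify("Call Site Design") == "call-site-design"
    assert slugify("  Hello,   World! ") == "hello-world"


# Step 2: only now do we write the implementation the test asked for.
def slugify(title):
    # Keep runs of lowercase letters and digits, join them with dashes.
    words = re.findall(r"[a-z0-9]+", title.lower())
    return "-".join(words)


# Runs without error once the implementation matches the imagined call site.
test_slugify_makes_url_friendly_titles()
```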

In contrast to that, in test-after-implementation we define the surface area while we are deep in the implementation details. In that mindset it is hard to solve the implementation problem and come up with an elegant surface API. We can do one or the other, but not at the same time. This often results in a surface API that makes it easy to implement behavior but hard to use it at call site. Let’s call this design for implementation site.
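Here is one way the difference might show up, as a rough Python sketch. `ReportBuilder` and `render_report` are hypothetical names. The first API mirrors the steps of the implementation, so every caller has to know them and their order. The second one is shaped by what the caller actually wants.

```python
class ReportBuilder:
    """Design for implementation site: the API mirrors the internal steps."""

    def __init__(self):
        self.rows = []
        self.total = None

    def load_rows(self, rows):
        self.rows = list(rows)

    def compute_total(self):
        self.total = sum(amount for _, amount in self.rows)

    def render(self):
        # Only gives a correct result if compute_total() was called before.
        lines = [f"{label}: {amount}" for label, amount in self.rows]
        lines.append(f"total: {self.total}")
        return "\n".join(lines)


def render_report(rows):
    """Design for call site: one call, no ordering to remember."""
    builder = ReportBuilder()
    builder.load_rows(rows)
    builder.compute_total()
    return builder.render()


rows = [("coffee", 3), ("bagel", 4)]

# Call site of the implementation-shaped API: three calls, order matters.
builder = ReportBuilder()
builder.load_rows(rows)
builder.compute_total()
by_steps = builder.render()

# Call site of the caller-shaped API.
by_call = render_report(rows)

print(by_steps == by_call)  # True
```

Both produce the same report. The second call site just cannot get the order wrong.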

Libraries Design for Call Site

Which brings me to the next angle: library development. I used to develop in-house libraries for mobile applications at Rakuten. Designing shared libraries is tricky. We adhere to semantic versioning, which means we cannot break the API contract of a library willy-nilly. Thus we trim the surface area of a library, i.e. we hide as many classes, methods, functions and constants as possible. The less surface area we expose the less we can break.
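As an illustration, here is a tiny single-file library sketched in Python rather than in a mobile language. The library name `greetlib` and all of its members are hypothetical. Python only marks things as private by convention (leading underscore, `__all__`), so treat this as a sketch of the idea, not of an enforcement mechanism.

```python
"""Hypothetical single-file library `greetlib`, for illustration only."""

# The whole public contract: one function. `__all__` controls what
# `from greetlib import *` exports and documents the intended surface.
__all__ = ["greet"]

# Private constant: free to change in a patch release.
_DEFAULT_GREETING = "Hello"


def _normalize(name):
    # Private helper: can be renamed or removed without a major version bump.
    return " ".join(name.split()).title()


def greet(name, greeting=_DEFAULT_GREETING):
    """Public API: changing this signature is a breaking change under semantic versioning."""
    return f"{greeting}, {_normalize(name)}!"


print(greet("  ada   lovelace "))  # Hello, Ada Lovelace!
```

With one public function there is exactly one thing that can force a major version bump.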

While writing shared libraries I would first imagine what the ideal call site code should be and write it as if it already existed. Of course that didn’t compile, so I had the IDE generate the missing function/method/class. Only then would I code the implementation. Next I would write tests, and at the very end I would refactor the internals to make them easy to understand. So I wore 4 distinct hats during development:

The call-site-design-hat

The implement-behavior-hat

The test-hat

The refactor-hat

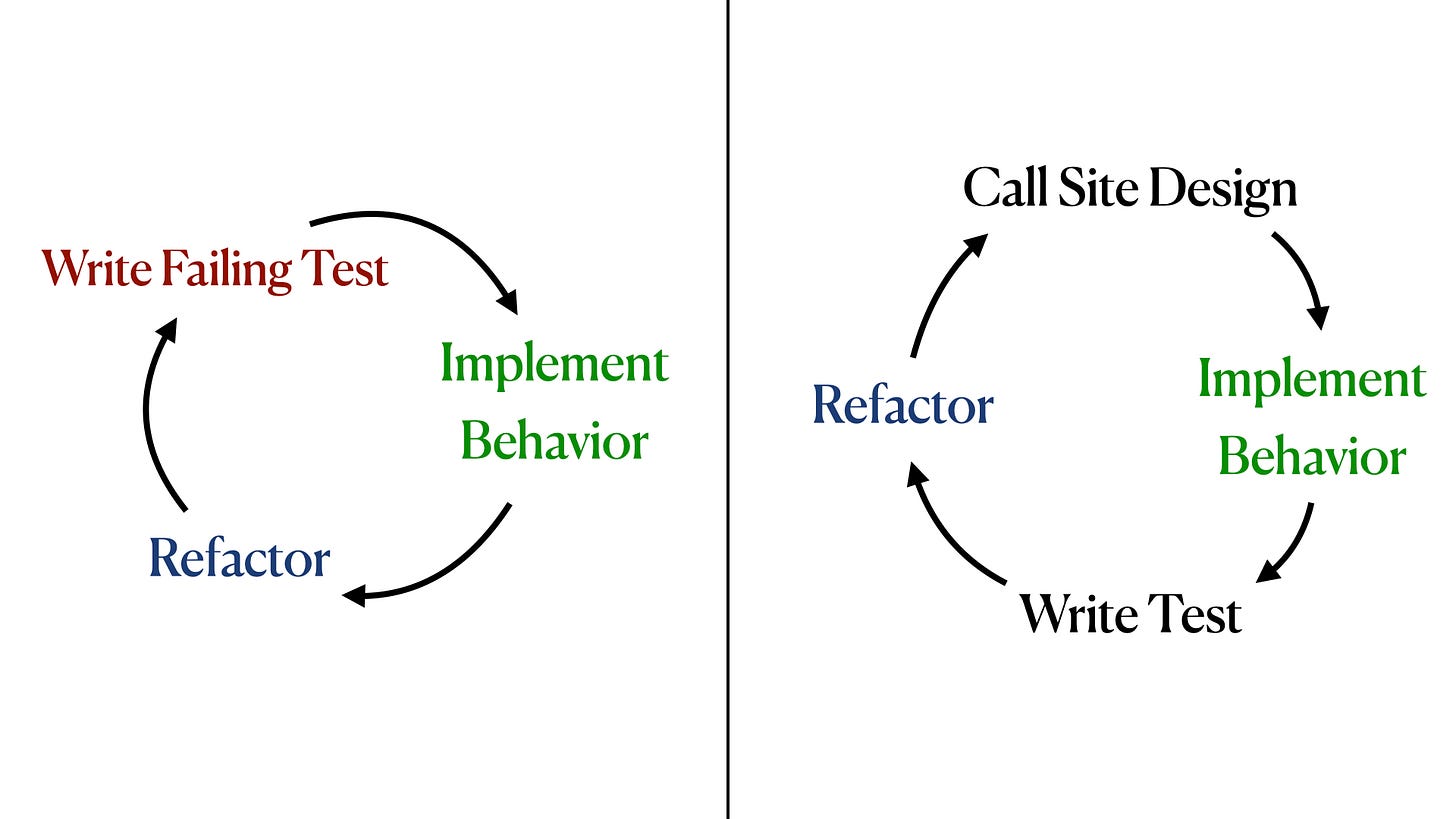

This is different from the 3 hats of TDD, aka red-green-refactor (shown on the left). I don’t have a fitting color for call site design, so I kept only the green & blue used in TDD to visualize the similarities.

Treating call site design as a separate activity came in handy later on for reviews of other libraries. I used my call-site-design-hat to reimagine how these libraries could be simpler and easier to use, with total disregard for ease of implementation.

(A short disclaimer: I’m not saying this is better or worse than TDD or trying to bash TDD. I’m just sharing what has worked for me in the past and how I think about developing software.)

Surface Areas of Bounded Contexts

The last angle to approach this idea of surface API and call site design is domain driven design. In particular the concept of bounded context.

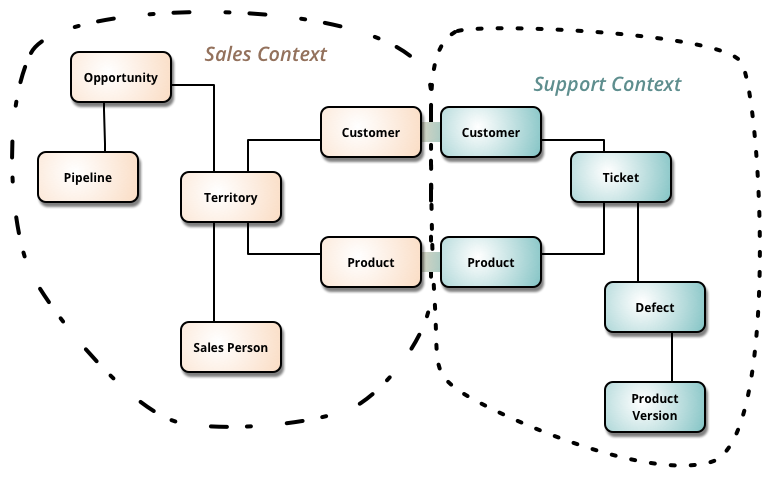

DDD deals with large models by dividing them into different Bounded Contexts and being explicit about their interrelationships.

- Bliki

The explicit relationships between bounded contexts are surface areas. Once we build a system responsible for a bounded context, the surface area turns into a surface API of that system. The support context above hides tickets, defects and product versions from other contexts. It shares only customers and products with other contexts. That’s exactly what we did in shared library development! Hiding everything we can keep internal and exposing only the minimal surface area necessary. In summary, modeling domains and defining bounded contexts results in better call site design.
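Translated into code, the support context could look roughly like the Python sketch below. Only the split between shared concepts (customers, products) and hidden ones (tickets) comes from the diagram. The class and method names such as `SupportContext`, `report_problem` and `customers_with_open_tickets` are assumptions made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Customer:
    """Shared with other contexts."""
    name: str


@dataclass(frozen=True)
class Product:
    """Shared with other contexts."""
    name: str


@dataclass
class _Ticket:
    """Internal to the support context; never handed to other contexts."""
    customer: Customer
    product: Product
    description: str
    resolved: bool = False


class SupportContext:
    """Hypothetical surface API of the support context."""

    def __init__(self):
        self._tickets = []

    def report_problem(self, customer, product, description):
        self._tickets.append(_Ticket(customer, product, description))

    def customers_with_open_tickets(self):
        # Answers in terms of the shared concept (Customer), not tickets.
        seen = []
        for ticket in self._tickets:
            if not ticket.resolved and ticket.customer not in seen:
                seen.append(ticket.customer)
        return seen


# Call site in some other context, e.g. sales:
support = SupportContext()
ada = Customer("Ada")
widget = Product("Widget")
support.report_problem(ada, widget, "does not start")
support.report_problem(ada, widget, "makes noise")

print(support.customers_with_open_tickets())  # [Customer(name='Ada')]
```

The caller speaks only in customers and products. How tickets are stored or resolved stays free to change.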

Test Driven Design forces you to design for call site.

We design shared libraries for call site.

Domain driven design leads to better call site design.

Design first for call site, second for implementation.

Design first for the user, second for the maintainer.

Epilogue: Blind spot of katas & dojos

When I hire developers for any position - junior, senior, lead, architect - I look for a good call site designer. I don’t look for excessive knowledge in algorithms & data structures. In commercial software call site design matters, algorithms & data structures don’t. That being said, call site design is a blind spot of all code dojos and coding kata services (HackerRank, Exercism, Codility, LeetCode etc.). Those platforms teach the syntax, standard libraries and algorithms & data structures. But they don’t teach how to draw boundaries. How to design a surface API to be used by humans. How to write & evolve tests along that boundary.

So I pretty much ignore the results of such automated coding tests in the hiring process. The numeric results are simply too low on signal. A score of 0 usually means “they didn’t try” - a little bit of signal. A score of 100 means… nothing really. 🤷♀️

Hyperlinks

A Few Rules

The person who tells the most compelling story wins. Not the best idea. Just the story that catches people’s attention and gets them to nod their heads.

I don’t agree with all of the statements in that post. But I’ve seen evidence for the very first one (quoted above) time and again.

Alignment

Another discussion on creator x product alignment. A lot of good arguments (and a civil discussion, unheard of on Twitter).

A Better Code Review

Gilad Peleg makes some compelling arguments against pre-merge code reviews. In particular I like the negative side effect that such code reviews have on a team:

This is why I think having code reviews, while thinking it increases confidence in finding bugs can overall reduce code quality because the engineer might spend less time thinking about edge cases or code quality in general. You also share responsibility with another human who does the review, making it easier for you to be less pedantic than you could.

That’s it for this week. If you enjoyed this ☝️ leave a comment or share it with a friend.